DeepSeek AI Launches Multimodal "Janus-Pro-7B" Model with Im…

Author: Shirley | Date: 25-03-09 13:06 | Views: 13 | Comments: 0

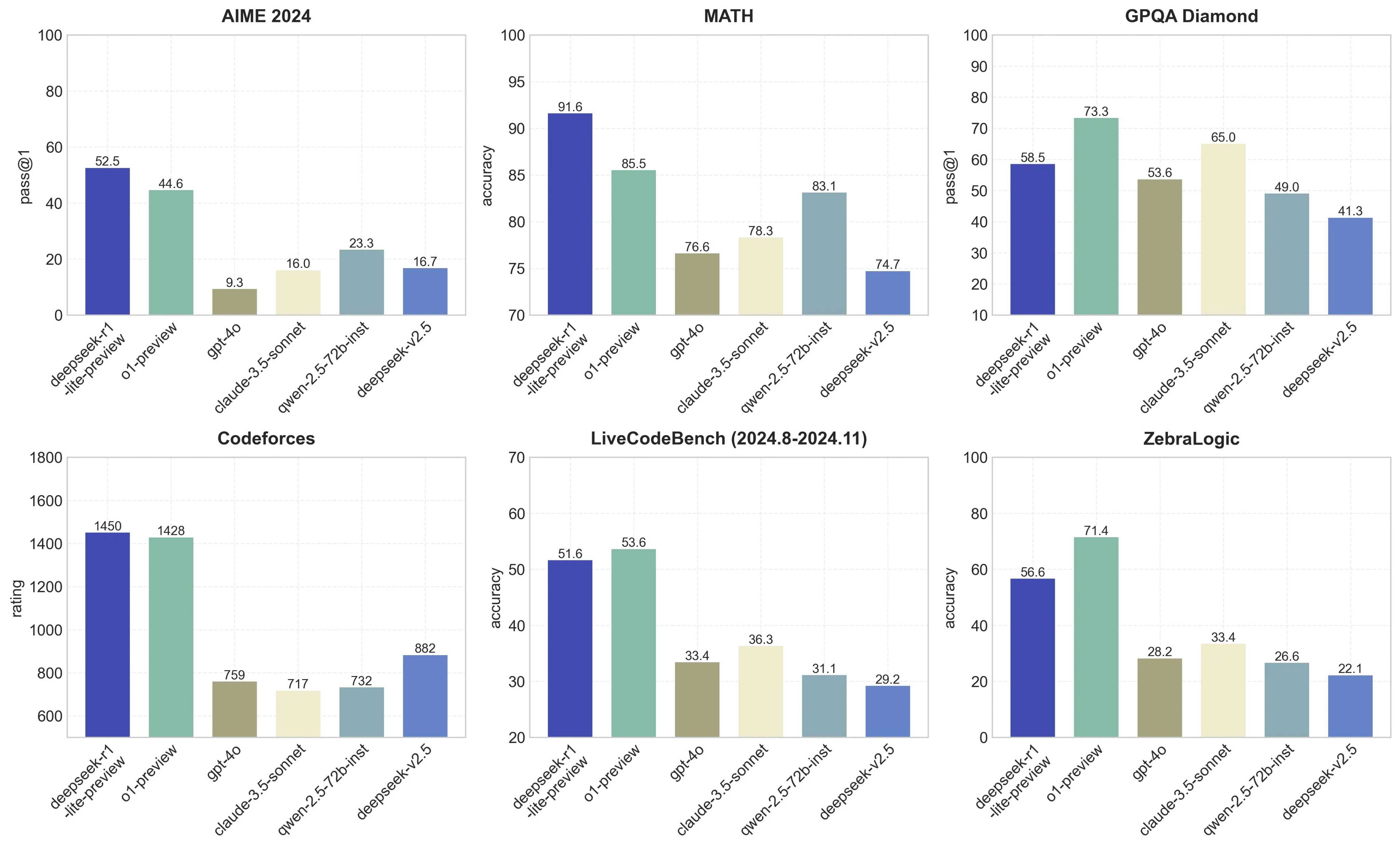

Open Models. In this project, we used various proprietary frontier LLMs, such as GPT-4o and Sonnet, but we also explored using open models like DeepSeek and Llama-3. DeepSeek Coder V2 has demonstrated exceptional performance across various benchmarks, often surpassing closed-source models like GPT-4 Turbo, Claude 3 Opus, and Gemini 1.5 Pro in coding and math-specific tasks. For example, that is less steep than the original GPT-4 to Claude 3.5 Sonnet inference price differential (10x), and 3.5 Sonnet is a better model than GPT-4. This update introduces compressed latent vectors to boost performance and reduce memory usage during inference. To ensure unbiased and thorough performance assessments, DeepSeek AI designed new problem sets, such as the Hungarian National High-School Exam and Google's instruction-following evaluation dataset. 2. Train the model using your dataset. Fix: Use stricter prompts (e.g., "Answer using only the provided context") or upgrade to larger models like 32B. However, users should be aware of the ethical considerations that come with using such a powerful and uncensored model. However, DeepSeek-R1-Zero encounters challenges such as endless repetition, poor readability, and language mixing. This extensive language support makes DeepSeek Coder V2 a versatile tool for developers working across various platforms and technologies.
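The "stricter prompt" fix mentioned above can be sketched as a small helper. This is only an illustration: the function name `build_strict_prompt` and the exact wording of the instruction are assumptions, not part of any DeepSeek API.

```python
def build_strict_prompt(context: str, question: str) -> str:
    """Wrap a question so the model is told to rely on the given context only.

    Hypothetical helper for illustration; the wording is an assumption.
    """
    return (
        "Answer using only the provided context. "
        "If the context does not contain the answer, say so.\n\n"
        f"Context:\n{context.strip()}\n\n"
        f"Question: {question.strip()}"
    )


# Example usage with a fact taken from this post.
prompt = build_strict_prompt(
    context="DeepSeek Coder V2 supports 338 programming languages.",
    question="How many programming languages does DeepSeek Coder V2 support?",
)
```

The resulting string can be sent as the user message to whichever model you run locally or call remotely.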


DeepSeek is a powerful AI tool designed to help with various tasks, from programming assistance to data analysis. A general-use model that combines advanced analytics capabilities with a vast 13 billion parameter count, enabling it to perform in-depth data analysis and support complex decision-making processes. Whether you're building simple models or deploying advanced AI solutions, DeepSeek offers the capabilities you need to succeed. With its impressive capabilities and performance, DeepSeek Coder V2 is poised to become a game-changer for developers, researchers, and AI enthusiasts alike. Despite its excellent performance, DeepSeek-V3 requires only 2.788M H800 GPU hours for its full training. Fix: Always provide full file paths (e.g., /src/parts/Login.jsx) instead of vague references. You get GPT-4-level smarts without the cost, full control over privacy, and a workflow that feels like pairing with a senior developer. For Code: Include explicit instructions like "Use Python 3.11 and type hints". An AI observer, Rowan Cheung, indicated that the new model outperforms competitors OpenAI's DALL-E 3 and Stability AI's Stable Diffusion on some benchmarks like GenEval and DPG-Bench. The model supports an impressive 338 programming languages, a significant increase from the 86 languages supported by its predecessor.


Its supported programming languages have expanded from 86 to 338, covering both mainstream and niche languages to meet diverse development needs. Optimize your model's performance by fine-tuning hyperparameters. This significant improvement highlights the efficacy of our RL algorithm in optimizing the model's performance over time. Monitor Performance: Track latency and accuracy over time. Utilize pre-trained models to save time and resources. As generative AI enters its second year, the conversation around large models is shifting from consensus to differentiation, with the debate centered on belief versus skepticism. By making its models and training data publicly available, the company encourages thorough scrutiny, allowing the community to identify and address potential biases and ethical issues. Regular testing of each new app version helps enterprises and agencies identify and address security and privacy risks that violate policy or exceed an acceptable level of risk. To address this issue, we randomly split a certain proportion of such combined tokens during training, which exposes the model to a wider array of special cases and mitigates this bias. Collect, clean, and preprocess your data to ensure it's ready for model training.
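The random splitting of combined tokens described above might look roughly like the following. This is a minimal sketch under stated assumptions: the `split_map` table, the `split_prob` parameter, and the function name are all hypothetical, and the post does not say what proportion is split or how combined tokens are identified.

```python
import random


def split_combined_tokens(tokens, split_map, split_prob=0.1, rng=None):
    """Randomly replace some combined tokens with their constituent parts.

    tokens     -- list of token strings for one training example
    split_map  -- hypothetical table: combined token -> list of its parts
    split_prob -- assumed probability of splitting each combined token
    rng        -- optional random.Random instance for reproducibility
    """
    rng = rng or random.Random()
    out = []
    for tok in tokens:
        parts = split_map.get(tok)
        if parts is not None and rng.random() < split_prob:
            out.extend(parts)  # expose the model to the split form
        else:
            out.append(tok)    # keep the token as it was
    return out


# Toy example: ".\n" is treated as a token that merges punctuation and a newline.
split_map = {".\n": [".", "\n"]}
tokens = ["Done", ".\n", "Next"]
always_split = split_combined_tokens(tokens, split_map, split_prob=1.0)
never_split = split_combined_tokens(tokens, split_map, split_prob=0.0)
```

Passing a seeded `random.Random` makes the augmentation reproducible across runs.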

DeepSeek Coder V2 is the result of an innovative training process that builds upon the success of its predecessors. Critically, DeepSeekMoE also introduced new approaches to load-balancing and routing during training; traditionally MoE increased communications overhead in training in exchange for efficient inference, but DeepSeek's approach made training more efficient as well. Some critics argue that DeepSeek has not introduced fundamentally new techniques but has simply refined existing ones. For those who prefer a more interactive experience, DeepSeek offers a web-based chat interface where you can interact with DeepSeek Coder V2 directly. DeepSeek is a versatile and powerful AI tool that can significantly enhance your projects. This level of mathematical reasoning capability makes DeepSeek Coder V2 an invaluable tool for students, educators, and researchers in mathematics and related fields. DeepSeek Coder V2 employs a Mixture-of-Experts (MoE) architecture, which allows for efficient scaling of model capacity while keeping computational requirements manageable.
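To illustrate why an MoE layer keeps compute manageable, here is a minimal sketch of generic top-k expert routing. It is not DeepSeek's implementation: it omits the load-balancing and routing refinements mentioned above, and the toy experts, gate logits, and function names are assumptions chosen for illustration.

```python
import math


def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def route_top_k(gate_logits, k=2):
    """Return (expert_index, weight) pairs for the k highest-scoring experts."""
    probs = softmax(gate_logits)
    top = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]
    norm = sum(probs[i] for i in top)  # renormalize over the chosen experts
    return [(i, probs[i] / norm) for i in top]


def moe_forward(x, experts, gate_logits, k=2):
    """Run only the selected experts and mix their outputs by gate weight."""
    return sum(w * experts[i](x) for i, w in route_top_k(gate_logits, k))


# Toy experts: each one just scales its input by a different factor.
experts = [lambda x, s=s: s * x for s in (1.0, 2.0, 3.0, 4.0)]
gate_logits = [0.1, 2.0, 0.3, 1.5]  # hypothetical router scores for one token

selected = route_top_k(gate_logits, k=2)
output = moe_forward(1.0, experts, gate_logits, k=2)
```

Only two of the four experts run for this input, so total capacity grows with the number of experts while per-token compute depends on `k`.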
