Ruthless DeepSeek AI Strategies Exploited

Posted by Bertha on 25-03-05 07:22

The bot has also been helped by continued public curiosity and a willingness among people to try different uses and not abandon it after disappointing results. DeepSeek-R1, however, uses a technique called Mixture of Experts (MoE) to optimize its performance. To achieve load balancing among the different experts in the MoE part, we need to ensure that each GPU processes approximately the same number of tokens. By combining MoE and reinforcement learning (RL), DeepSeek-R1 has redefined how AI can think, reason, and solve complex challenges. You can try the free DeepSeek online chat version of these tools. Users are rushing to try the new chatbot, sending DeepSeek's AI Assistant to the top of the iPhone and Android app charts in many countries. DeepSeek's chatbot cited the Israel-Hamas ceasefire and linked to several Western news outlets such as BBC News, but not all of the stories appeared to be relevant to the topic.
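The load-balancing goal mentioned above can be illustrated with a small sketch. It is not DeepSeek's implementation: the top-k routing, the toy router scores, the layout in which consecutive experts share a GPU, and the bias-nudging heuristic in `fit_balancing_bias` are all assumptions chosen for illustration. The sketch counts how many tokens land on each GPU and then adjusts a per-expert bias until the counts are closer to even.

```python
import random
from collections import Counter


def route_tokens(scores, top_k):
    """For each token, pick the indices of its top_k highest-scoring experts."""
    return [
        sorted(range(len(row)), key=lambda e: row[e], reverse=True)[:top_k]
        for row in scores
    ]


def expert_load(assignments, num_experts):
    """Count how many tokens were routed to each expert."""
    counts = Counter(e for chosen in assignments for e in chosen)
    return [counts[e] for e in range(num_experts)]


def gpu_load(load, experts_per_gpu):
    """Sum expert loads per GPU, assuming consecutive experts share a GPU."""
    return [
        sum(load[i:i + experts_per_gpu])
        for i in range(0, len(load), experts_per_gpu)
    ]


def imbalance(load):
    """Max load divided by mean load; 1.0 means perfectly even."""
    return max(load) / (sum(load) / len(load))


def fit_balancing_bias(scores, top_k, steps=200, step_size=0.01):
    """Hypothetical heuristic: lower an expert's routing bias when it is
    overloaded and raise it when it is underloaded."""
    num_experts = len(scores[0])
    target = len(scores) * top_k / num_experts
    bias = [0.0] * num_experts
    for _ in range(steps):
        biased = [[s + b for s, b in zip(row, bias)] for row in scores]
        load = expert_load(route_tokens(biased, top_k), num_experts)
        bias = [
            b - step_size if n > target else b + step_size if n < target else b
            for b, n in zip(bias, load)
        ]
    return bias


def make_skewed_scores(num_tokens, num_experts, skew=0.5, seed=0):
    """Toy router scores in which expert 0 is systematically favoured."""
    rng = random.Random(seed)
    return [
        [rng.random() + (skew if e == 0 else 0.0) for e in range(num_experts)]
        for _ in range(num_tokens)
    ]


if __name__ == "__main__":
    scores = make_skewed_scores(num_tokens=512, num_experts=8)
    raw = expert_load(route_tokens(scores, top_k=2), 8)
    bias = fit_balancing_bias(scores, top_k=2)
    biased = [[s + b for s, b in zip(row, bias)] for row in scores]
    balanced = expert_load(route_tokens(biased, top_k=2), 8)
    print("tokens per GPU before:", gpu_load(raw, experts_per_gpu=2))
    print("tokens per GPU after: ", gpu_load(balanced, experts_per_gpu=2))
```

The bias only changes which experts are selected; in a real model the original scores would presumably still weight the expert outputs, but that part is outside this sketch.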

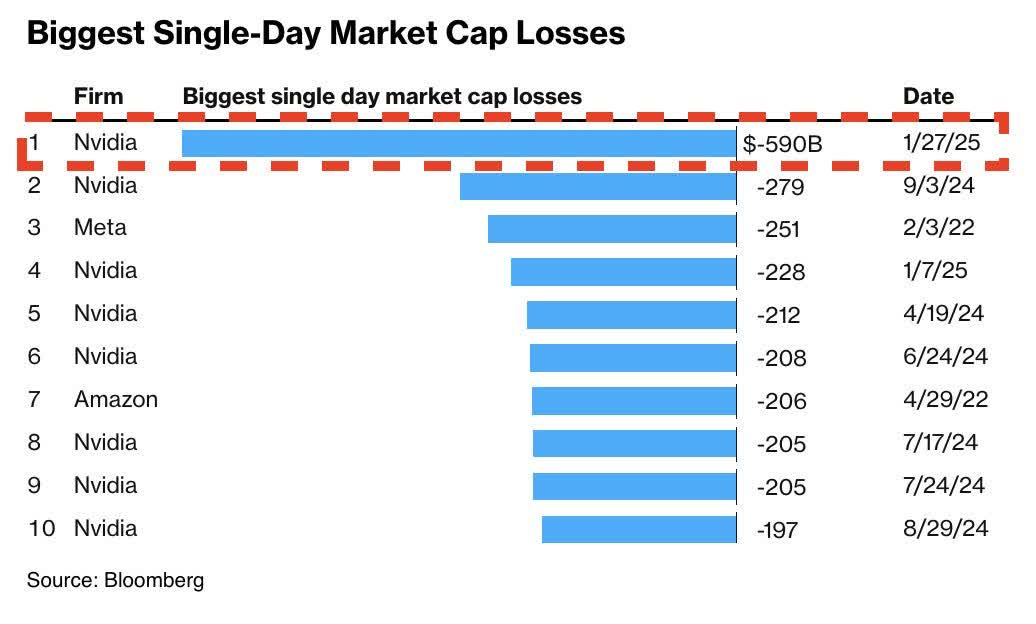

The revelation that DeepSeek's chatbot offers comparable performance to its US rival but was reportedly developed at a fraction of the cost "is causing panic within US tech companies and in the stock market", said NBC News. All of that at a fraction of the cost of comparable models. Financially, DeepSeek-R1 also offers substantial cost savings. DeepSeek-R1 achieves very high scores in many of the Hugging Face tests, outperforming models like Claude-3.5, GPT-4o, and even some variants of OpenAI o1 (though not all). DeepSeek-R1 is not just another AI model; it is a cost-efficient, high-performance, open-source alternative for researchers, businesses, and developers seeking advanced AI reasoning capabilities. The findings reveal that RL empowers DeepSeek-R1-Zero to achieve strong reasoning capabilities without the need for any supervised fine-tuning data. Both consist of a pre-training stage (tons of data from the web) and a post-training stage. Vision Transformers (ViT) are a class of models designed for image recognition tasks.

A comprehensive survey of large language models and multimodal large language models in medicine. Dozens of companies have committed to implementing DeepSeek or specific applications of the AI large language model since January, when the Hangzhou-based app developer emerged as China's low-cost alternative to Western competitors such as ChatGPT. Already, others are replicating DeepSeek's high-performance, low-cost training approach. As far as we know, OpenAI has not tried this approach (they use a more complicated RL algorithm). It's unambiguously hilarious that it's a Chinese company doing the work OpenAI was named to do. Fun times: robotics company founder Bernt Øivind Børnich is claiming we are on the cusp of a post-scarcity society where robots make anything physical you want. No longer content with the comfort of tried-and-true business models, they are making a bold pivot toward embracing risk and uncertainty. It is particularly useful in industries such as customer service, where it can automate interactions with customers, and content marketing, where it can help create engaging and relevant content. No human can play chess like AlphaZero. I heard someone say that AlphaZero was like the silicon reincarnation of former World Chess Champion Mikhail Tal: bold, imaginative, and full of surprising sacrifices that somehow won him so many games.

Tristan Harris says we are not ready for a world where 10 years of scientific research can be done in a month. However, to make faster progress for this version, we opted to use standard tooling (Maven and OpenClover for Java, gotestsum for Go, and Symflower for consistent tooling and output), which we can then swap for better solutions in the coming versions. Then, to make R1 better at reasoning, they added a layer of reinforcement learning (RL). Instead of showing Zero-type models millions of examples of human language and human reasoning, why not teach them the basic rules of logic, deduction, induction, fallacies, cognitive biases, the scientific method, and general philosophical inquiry, and let them discover better ways of thinking than humans could ever come up with? AlphaGo Zero learned to play Go better than AlphaGo but also weirder to human eyes. What if, instead of becoming more human, Zero-type models get weirder as they get better? What if you could get much better results on reasoning models by showing them the entire internet and then telling them to figure out how to think with simple RL, without using SFT human data? But what if it worked better? I look forward to working with Rep.
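To make "simple RL, without SFT human data" concrete, here is a toy sketch, assuming a single arithmetic question, a fixed list of candidate answers, and a rule-based reward that only checks correctness. It uses the plain REINFORCE update on a softmax policy; this is a stand-in chosen for illustration, not the algorithm DeepSeek used, and the names `reward` and `train` are hypothetical. The policy is never shown a worked example; it only receives a reward when a sampled answer passes the check.

```python
import math
import random


def softmax(logits):
    """Convert logits to probabilities in a numerically stable way."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def reward(question, answer):
    """Rule-based check: 1.0 if the answer equals the sum, else 0.0."""
    a, b = question
    return 1.0 if answer == a + b else 0.0


def train(question, candidates, steps=500, lr=0.5, seed=0):
    """Learn a distribution over candidate answers from rewards alone."""
    rng = random.Random(seed)
    logits = [0.0] * len(candidates)
    for _ in range(steps):
        probs = softmax(logits)
        # Sample one answer from the current policy.
        i = rng.choices(range(len(candidates)), weights=probs)[0]
        r = reward(question, candidates[i])
        # REINFORCE: the gradient of log pi(i) with respect to the
        # logits is onehot(i) - probs, scaled here by the reward.
        for j in range(len(logits)):
            grad = (1.0 if j == i else 0.0) - probs[j]
            logits[j] += lr * r * grad
    return softmax(logits)


if __name__ == "__main__":
    candidates = [10, 11, 12, 13]
    probs = train((7, 5), candidates)
    for answer, p in zip(candidates, probs):
        print(answer, round(p, 3))
```

Because the reward is zero for wrong answers, only correct samples move the policy, which is why no labeled demonstrations are needed in this toy setting.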
