How to Start Out with DeepSeek for Less Than $100

Page information

Author: Gracie Shin | Date: 25-03-03 13:49 | Views: 10 | Comments: 0

Body

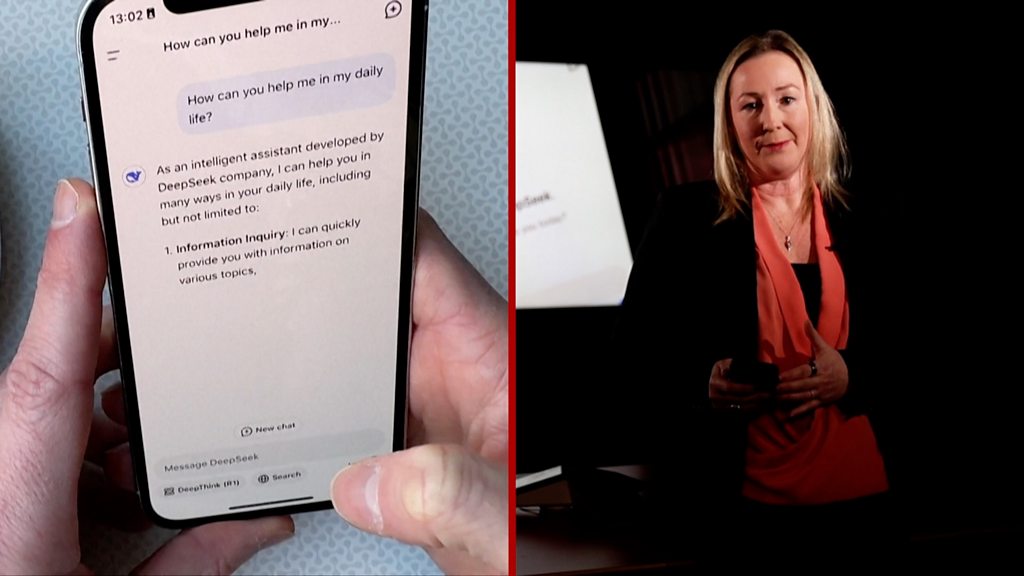

DeepSeek, the quiet giant leading China’s AI race, has been making headlines. A word of caution: DeepSeek’s models don’t beat the leading closed reasoning models, such as OpenAI’s o1, which may be preferable for the most challenging tasks. Despite that, DeepSeek-V3 achieved benchmark scores that matched or beat OpenAI’s GPT-4o and Anthropic’s Claude 3.5 Sonnet. And DeepSeek-V3 isn’t the company’s only star; it also released a reasoning model, DeepSeek-R1, which uses chain-of-thought reasoning like OpenAI’s o1. However, this structured reasoning comes at the cost of longer inference times. DeepSeek’s success against larger and more established rivals has been described both as "upending AI" and as "over-hyped." The company’s success was at least partially responsible for causing Nvidia’s stock price to drop by 18% in January, and for eliciting a public response from OpenAI CEO Sam Altman. "Reproduction alone is relatively cheap: based on public papers and open-source code, minimal times of training, or even fine-tuning, suffices."

The compute cost of regenerating DeepSeek’s dataset, which is required to reproduce the models, will also prove significant. DeepSeek doesn’t disclose the datasets or training code used to train its models, so the full training dataset and the training code remain hidden. Agentic AI applications may benefit from the capabilities of models such as DeepSeek-R1. For enterprise agentic AI, this translates to enhanced problem-solving and decision-making across various domains. Yes, DeepSeek AI can be integrated into web, mobile, and enterprise applications via APIs and open-source models. The system leverages a recurrent, transformer-based neural network architecture inspired by the successful use of Transformers in large language models (LLMs). The result is that the system needs to develop shortcuts and hacks to get around its constraints, and surprising behavior emerges. To put it in very simple terms, an LLM is an AI system trained on a huge amount of data and used to understand and assist humans in writing text, code, and much more. The model also uses a mixture-of-experts (MoE) architecture, which includes many neural networks, the "experts," that can be activated independently. Because each expert is smaller and more specialized, less memory is required to train the model, and compute costs are lower once the model is deployed.
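
To make the mixture-of-experts idea concrete, here is a minimal sketch of top-k expert routing in plain Python. It is an illustration only, not DeepSeek's actual routing scheme: the names `moe_forward`, `make_expert`, and `gate_weights`, the toy "experts" that merely scale their input, and the choice of `top_k=2` are all assumptions made for this example.

```python
import math


def softmax(scores):
    """Turn raw router scores into probabilities that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def make_expert(scale):
    """Hypothetical toy expert: stands in for a small neural network."""
    return lambda x: [scale * v for v in x]


def moe_forward(x, gate_weights, experts, top_k=2):
    """Route input x to the top_k highest-scoring experts and mix their outputs.

    gate_weights holds one row per expert; the router score for an expert
    is the dot product of its row with x.
    """
    scores = [sum(w * v for w, v in zip(row, x)) for row in gate_weights]
    probs = softmax(scores)

    # Only the top_k experts are evaluated; the rest stay idle for this input.
    chosen = sorted(range(len(experts)), key=lambda i: probs[i], reverse=True)[:top_k]
    norm = sum(probs[i] for i in chosen)

    output = [0.0] * len(x)
    for i in chosen:
        weight = probs[i] / norm  # renormalise over the chosen experts
        expert_out = experts[i](x)
        output = [o + weight * y for o, y in zip(output, expert_out)]
    return output, chosen


if __name__ == "__main__":
    experts = [make_expert(s) for s in (1.0, 2.0, 3.0, 4.0)]
    gate_weights = [[0.0, 0.0], [1.0, 0.0], [2.0, 0.0], [3.0, 0.0]]
    out, used = moe_forward([1.0, 0.0], gate_weights, experts, top_k=2)
    print("experts used:", used)
    print("output:", out)
```

In this sketch only two of the four experts run for a given input, which is the source of the compute savings at inference time; a real model would learn the router weights and use neural networks as experts.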

We are contributing to open-source quantization methods to facilitate the use of the HuggingFace tokenizer. "Reinforcement learning is notoriously tricky, and small implementation differences can lead to major performance gaps," says Elie Bakouch, an AI research engineer at HuggingFace. DeepSeek’s models are similarly opaque, but HuggingFace is trying to unravel the mystery. One training method samples the model’s responses to prompts, which are then reviewed and labeled by humans. It works, but having humans review and label the responses is time-consuming and expensive. And even if you don’t fully believe in transfer learning, you should believe that the models will get much better at having quasi "world models" inside them, enough to improve their performance quite dramatically. The exceptional performance of DeepSeek-R1 on benchmarks like AIME 2024, CodeForces, GPQA Diamond, MATH-500, MMLU, and SWE-Bench highlights its strong reasoning, mathematical, and coding capabilities. DeepSeek-R1 breaks down complex problems into multiple steps with chain-of-thought (CoT) reasoning, enabling it to tackle intricate questions with greater accuracy and depth. On simple queries, however, this step-by-step reasoning slows down responses and wastes computational resources, making reasoning models inefficient for high-throughput, fact-based tasks where simpler retrieval models would be more effective. One developer noted, "The DeepSeek AI coder chat has been a lifesaver for debugging complex code!"

But as ZDNet noted, in the background of all this are training costs that are orders of magnitude lower than for some competing models, as well as chips that aren’t as powerful as the chips at the disposal of U.S. [...] U.S.-allied countries. These are companies that face significant legal and financial risk if caught defying U.S. [...] AI agents are transforming business operations by automating processes, optimizing decision-making, and streamlining actions. No, they are the responsible ones, the ones who care enough to call for regulation; all the better if concerns about imagined harms kneecap inevitable competitors. Most "open" models provide only the model weights necessary to run or fine-tune the model. This was followed by DeepSeek LLM, which aimed to compete with other major language models. But this approach led to issues, like language mixing (the use of many languages in a single response), that made its responses difficult to read. The result is DeepSeek-V3, a large language model with 671 billion parameters. Better still, DeepSeek offers several smaller, more efficient versions of its main models, known as "distilled models." These have fewer parameters, making them easier to run on less powerful devices.
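
The link between parameter count and hardware requirements can be made concrete with back-of-the-envelope arithmetic. The sketch below estimates the memory needed just to store a model's weights. The 671-billion figure comes from the text above; the 7-billion-parameter distilled size, the precision table, and the function name `weight_memory_gb` are assumptions chosen for illustration. The estimate ignores activations, caches, and runtime overhead, so real requirements will be higher.

```python
# Bytes needed to store one parameter at a few common precisions (assumed set).
BYTES_PER_PARAM = {"fp32": 4.0, "fp16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_memory_gb(num_params: float, precision: str) -> float:
    """Approximate memory to hold the weights alone, in GB (1 GB = 1e9 bytes)."""
    return num_params * BYTES_PER_PARAM[precision] / 1e9


if __name__ == "__main__":
    models = [
        ("671B-parameter model", 671e9),
        ("hypothetical 7B distilled model", 7e9),
    ]
    for name, params in models:
        for precision in BYTES_PER_PARAM:
            gb = weight_memory_gb(params, precision)
            print(f"{name} @ {precision}: {gb:,.1f} GB")
```

Under these assumptions, the full model needs on the order of a terabyte for its weights at 16-bit precision, while a model with a few billion parameters fits in single-digit gigabytes when quantized, which is why distilled models are practical on less powerful devices.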
